Build your own perpetual autonomous database backup machine from a Raspberry Pi for $10 and never pay for backups again

After my general post on backing up MongoDB I decided to take it up a notch and build a fully autonomous backup machine. Just connect it to the Internet and watch the magic happen.

Hello everybody! So I wrote a post on how to set up free backups for MongoDB. But then I thought that it required a bit too much equipment: you needed a USB thumb drive and an old laptop. And it hit me: I could do the same thing on a $10 Raspberry Pi Zero W if I just uploaded my backups to Google Drive directly!

So here's what we will need today:

- Raspberry Pi Zero W ($10)

- Micro USB cable (you already have one probably)

- USB charger (almost any charger rated for at least 1A will work)

- Micro SD card (again, you already have one collecting dust on a shelf, but the minimum is 4 GB)

All in all, you'll spend $10 and an hour of your time and you'll end up with a solution that autonomously backs up your databases (actually, not only MongoDB, any type of popular database would work) whenever plugged in to power. Also, don't get ripped off: Raspberry Pi Zero W does cost just $10, no more, no less. Don't buy it off Amazon for $40.

Sounds awesome? Let's begin.

Getting the Linux up and running

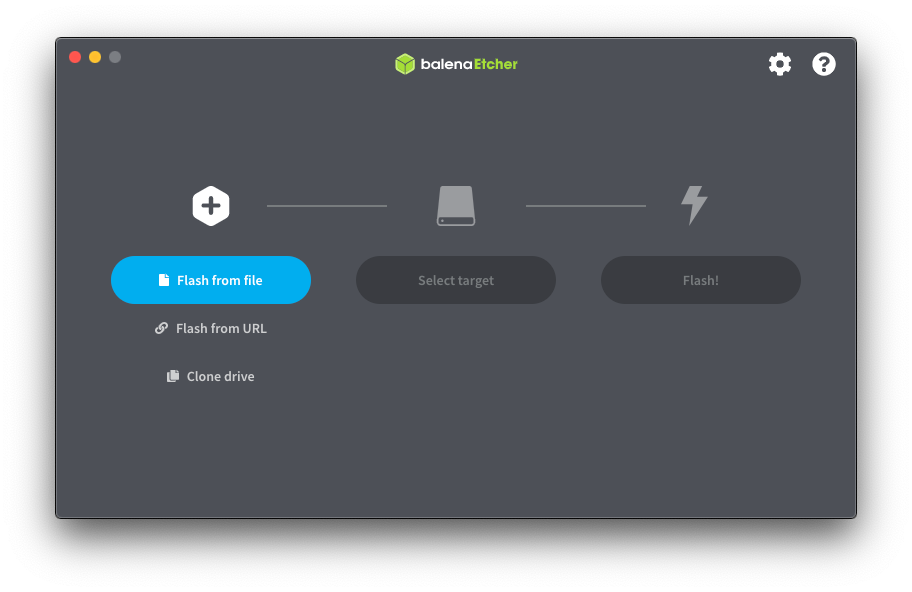

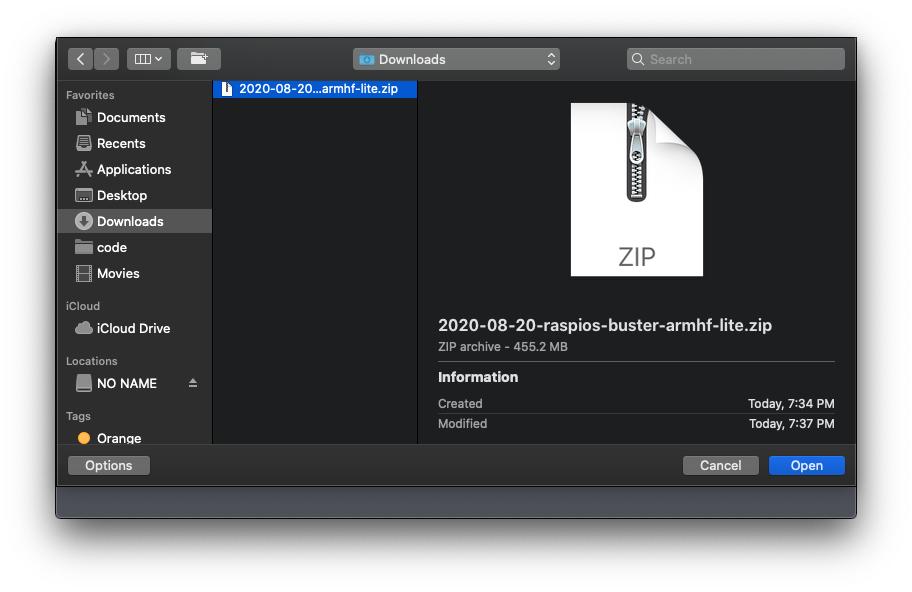

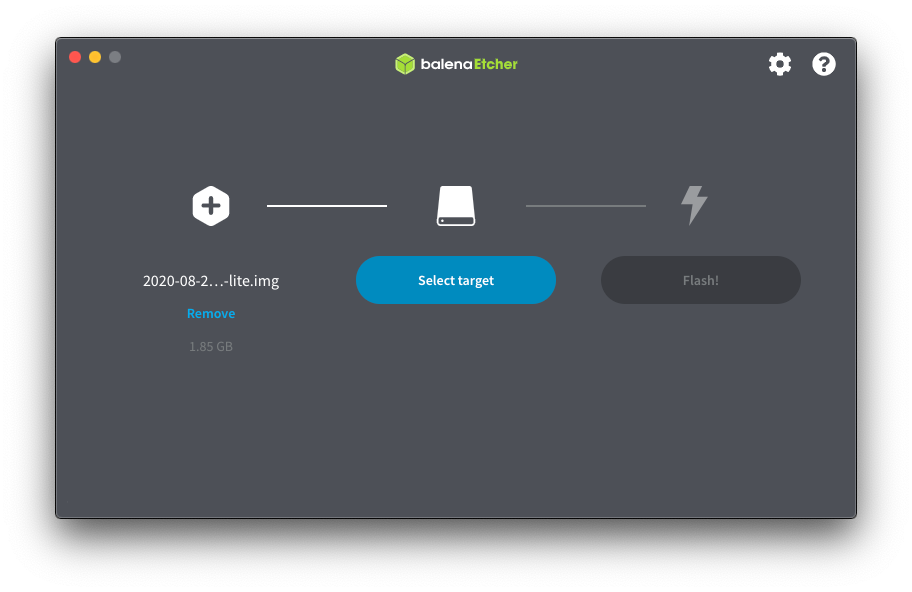

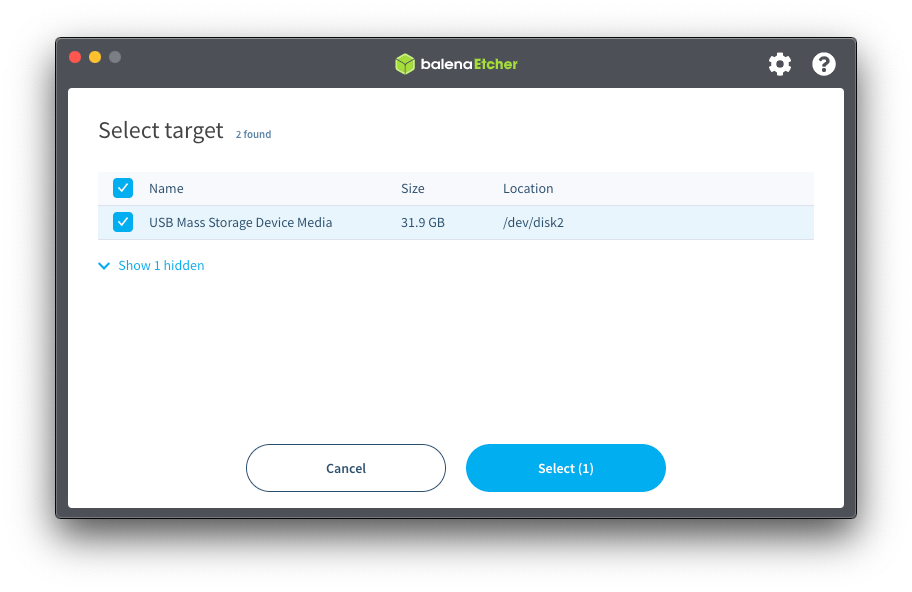

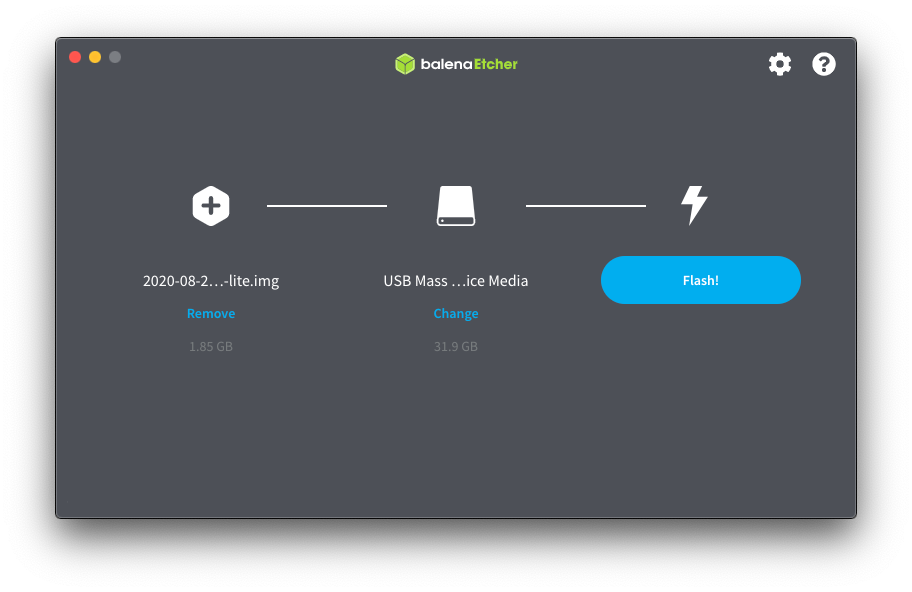

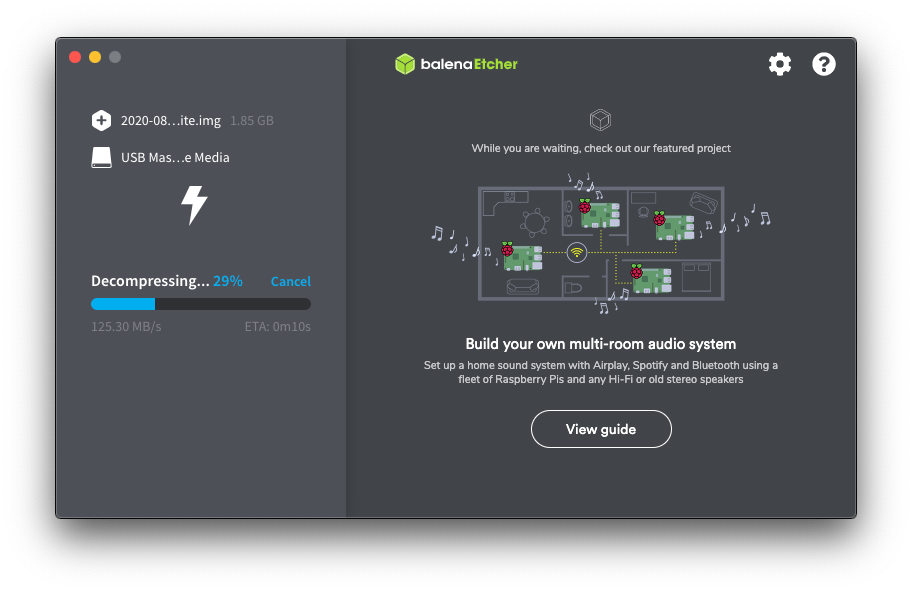

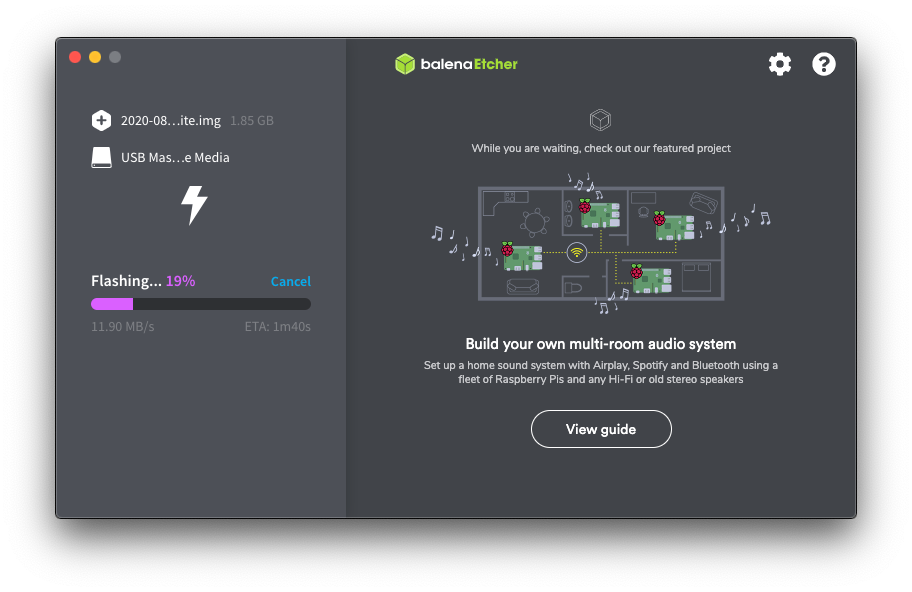

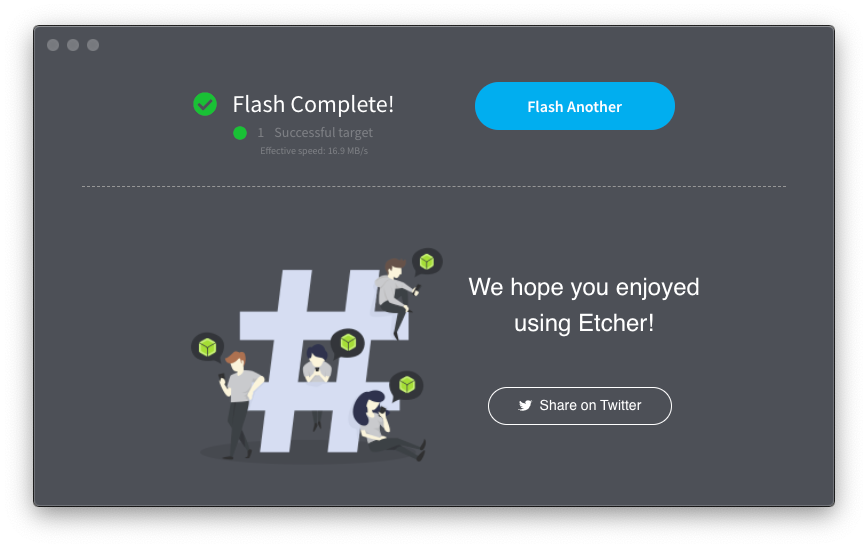

First, download Etcher and a lite Raspberry Pi OS image without desktop. Desktops?! Where we're going we don't need desktops!

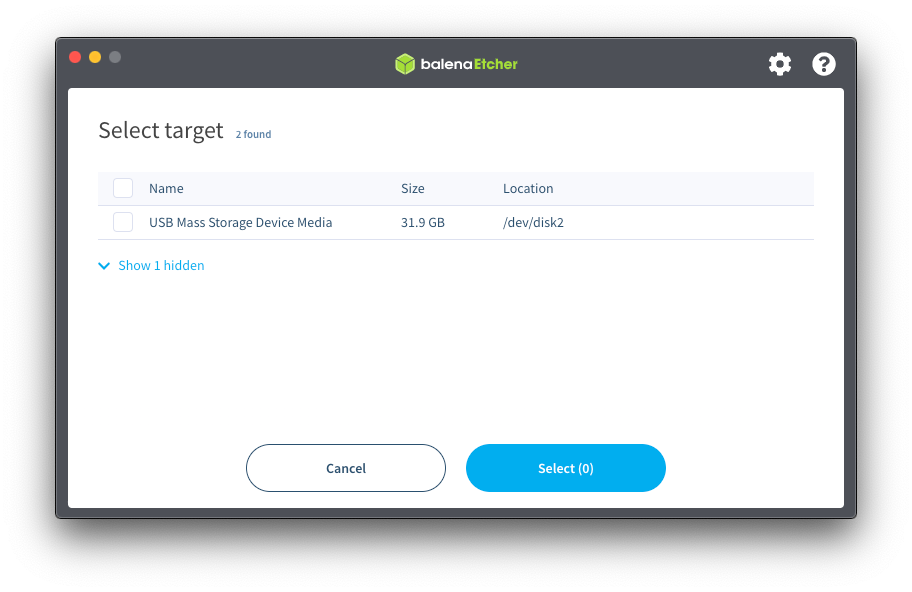

I'm running this software on macOS, but you can do everything on other operating systems as well. Connect your Micro SD card to your computer, launch Etcher and flash your Micro SD card with the Raspberry Pi OS image you've downloaded.

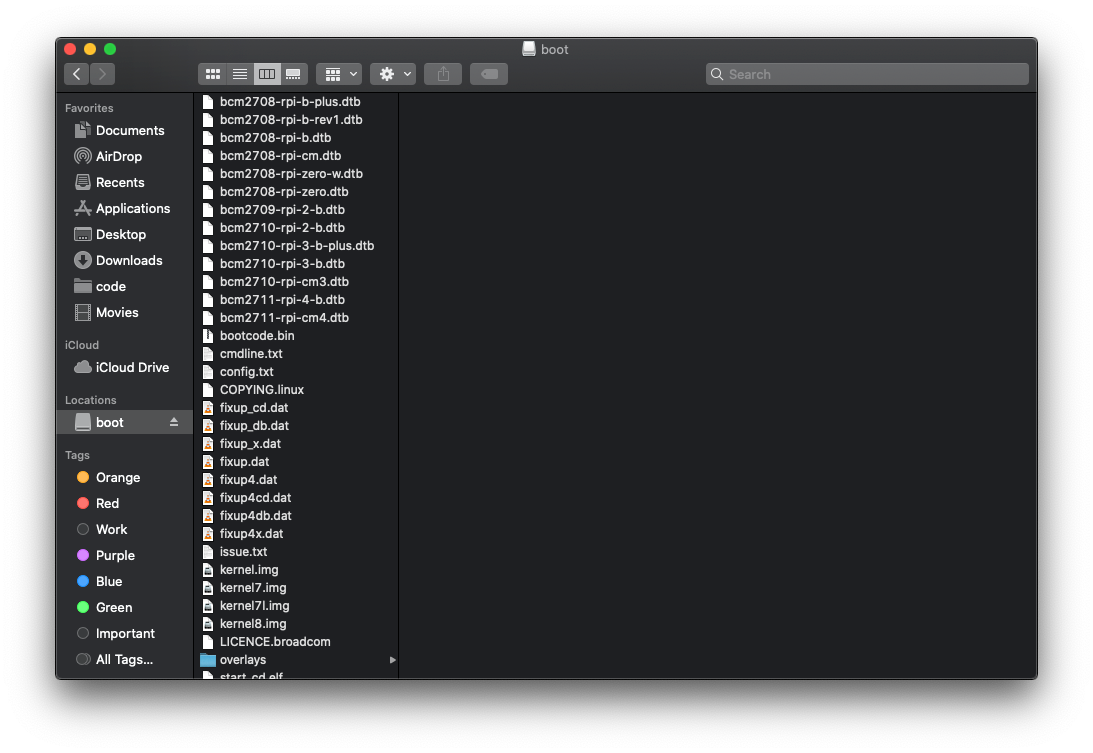

Now disconnect the Micro SD card and connect it again. You should be able to see the boot drive.

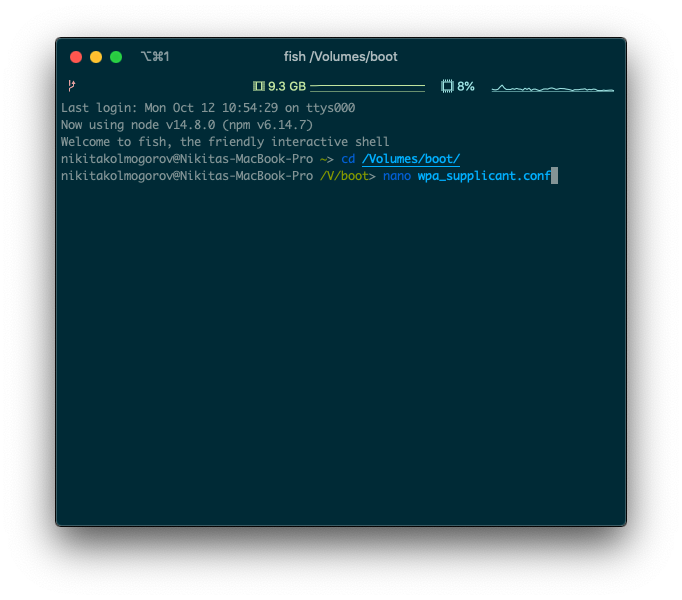

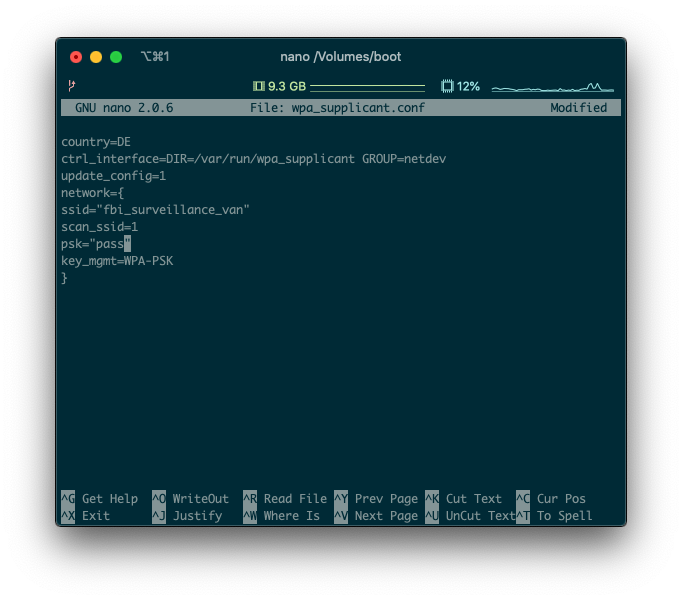

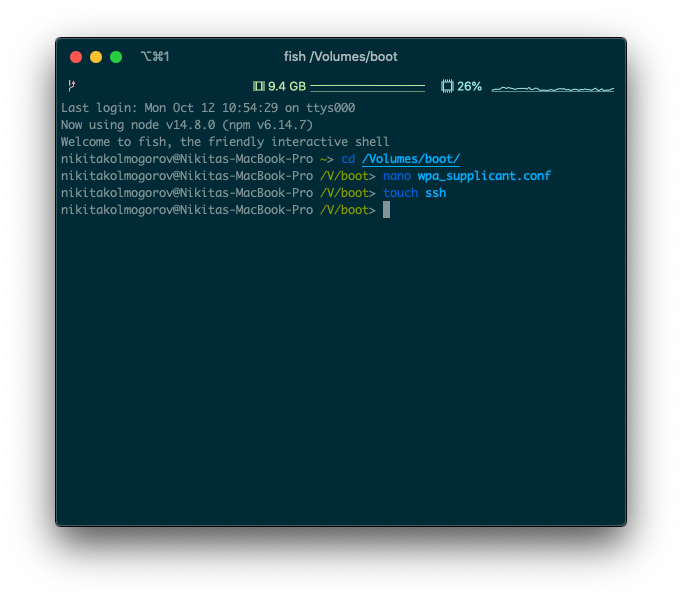

Let's enable Internet access. Make sure to create the files with a terminal tool like nano, because some graphical text editors save files without Unix line endings. Create a file called wpa_supplicant.conf in the root directory of the SD card's boot drive with the following content:

country=DE

ctrl_interface=DIR=/var/run/wpa_supplicant GROUP=netdev

update_config=1

network={

ssid="my_network"

scan_ssid=1

psk="my_password"

key_mgmt=WPA-PSK

}

Obviously, replace my_network and my_password with your wireless network credentials. I use 2.4GHz wifi, which is also the only band the Pi Zero W's radio supports. You should also set country to your own two-letter country code: it tells the Pi which wifi regulatory domain (allowed channels) to use.

Then let's enable SSH to manipulate the Pi from a terminal. Create an empty file named ssh in the same root directory of the boot drive with the command touch ssh.
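If you prefer to script these two files instead of typing them, here is a minimal sketch. The write_boot_files helper is my own invention, and /Volumes/boot is only an assumption about where macOS mounts the boot drive, so check the path on your machine first.

```shell
#!/bin/bash
# Sketch: write the two first-boot files onto the SD card's boot drive.
# write_boot_files is a hypothetical helper, not part of any tool.

write_boot_files() {
  local boot_dir="$1" ssid="$2" psk="$3" country="${4:-DE}"

  # printf writes plain "\n" (Unix) line endings.
  printf '%s\n' \
    "country=$country" \
    "ctrl_interface=DIR=/var/run/wpa_supplicant GROUP=netdev" \
    "update_config=1" \
    "network={" \
    "    ssid=\"$ssid\"" \
    "    scan_ssid=1" \
    "    psk=\"$psk\"" \
    "    key_mgmt=WPA-PSK" \
    "}" > "$boot_dir/wpa_supplicant.conf"

  # An empty file named "ssh" switches on the SSH server at first boot.
  : > "$boot_dir/ssh"
}

# Example (assumed mount point and placeholder credentials):
# write_boot_files /Volumes/boot "my_network" "my_password" DE
```

The function only writes into the directory you pass in, so you can try it on a scratch folder before pointing it at the real card.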

This is it! The setup is done. You can now insert the Micro SD card into your Pi and connect it to power! Make sure that you connect the MicroUSB cable to the "PWR" socket. It should take ~90 seconds for the first boot of your Pi (it is probably setting up something).

Connecting to the Pi

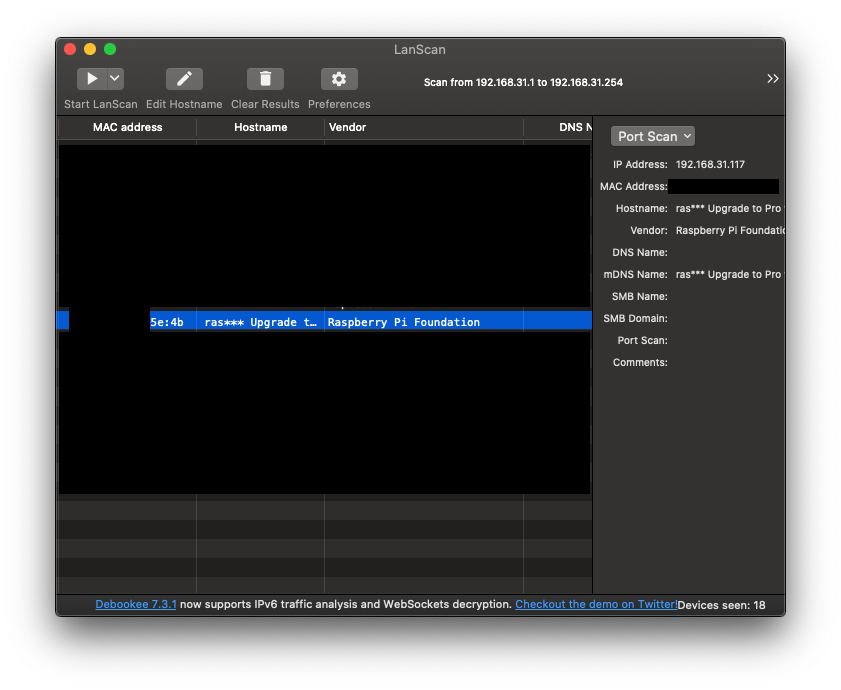

Now you need to find your Pi's IP address. You can use the free LanScan tool for macOS or something like nmap on other platforms. The name of the device should be similar to "raspberry pi".
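For the nmap route, a rough sketch could look like this. The find_pi name and the 192.168.1.0/24 subnet are assumptions, so substitute your own network range. On networks with mDNS, ping raspberrypi.local may also do the trick.

```shell
#!/bin/bash
# Sketch: ping-scan a subnet and print entries that look like a Raspberry Pi.
# find_pi is a hypothetical helper; the default subnet is an assumption.

find_pi() {
  local subnet="${1:-192.168.1.0/24}"
  # -sn does host discovery only (no port scan). -B 2 keeps the two
  # preceding lines, because the IP is printed above the MAC vendor line.
  nmap -sn "$subnet" | grep -i -B 2 'raspberry'
}

# Example: find_pi 192.168.0.0/24
# Running the script with sudo lets nmap show MAC vendors as well.
```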

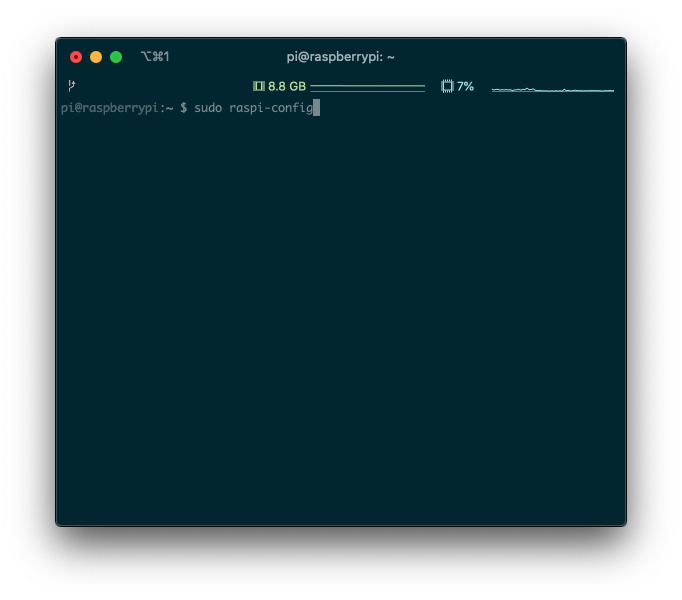

Now we can ssh into the Raspberry Pi! Connect as the pi user; the default password is raspberry.

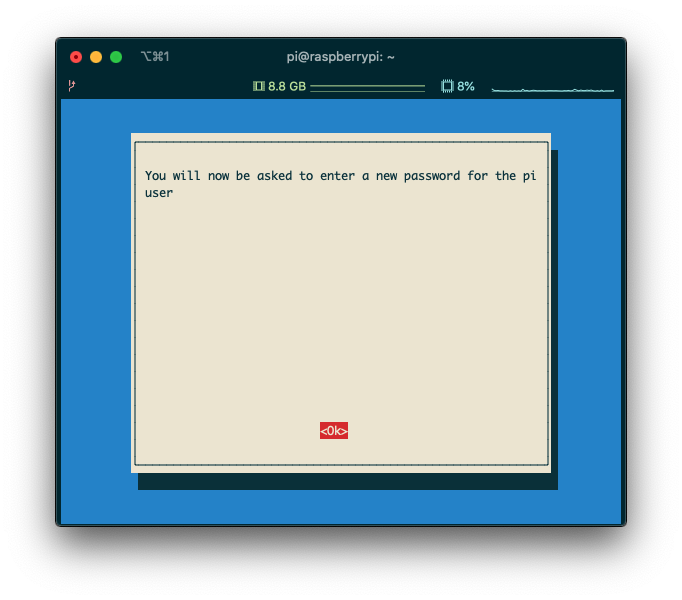

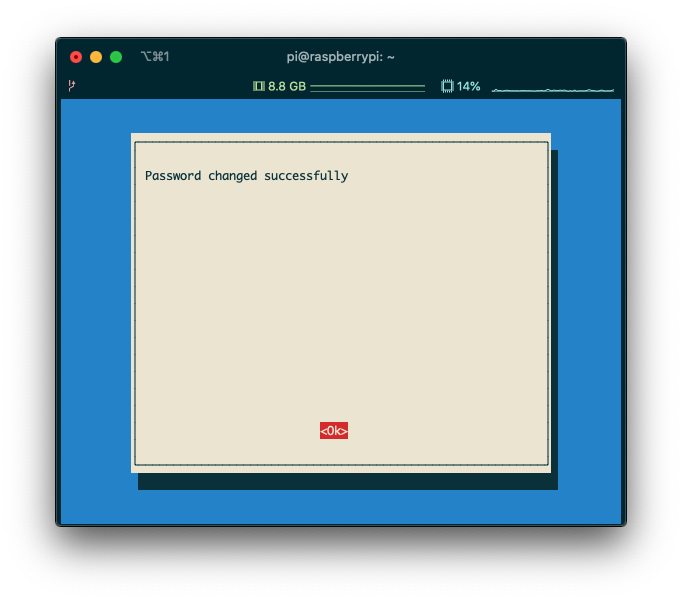

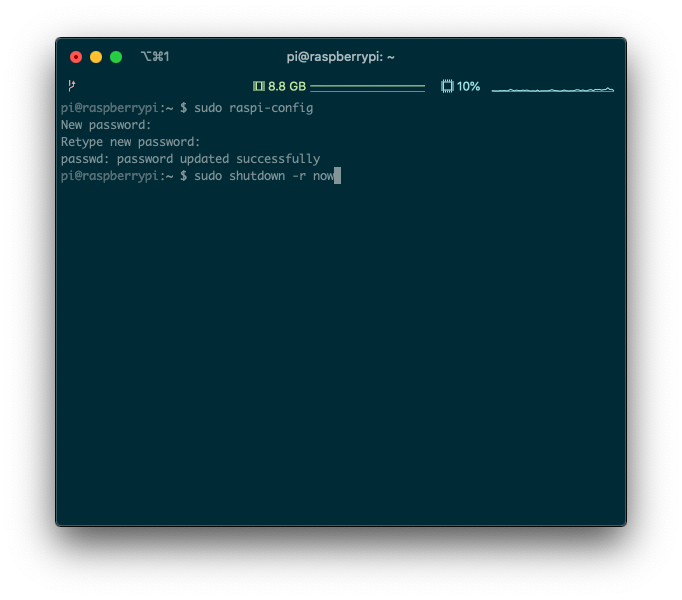

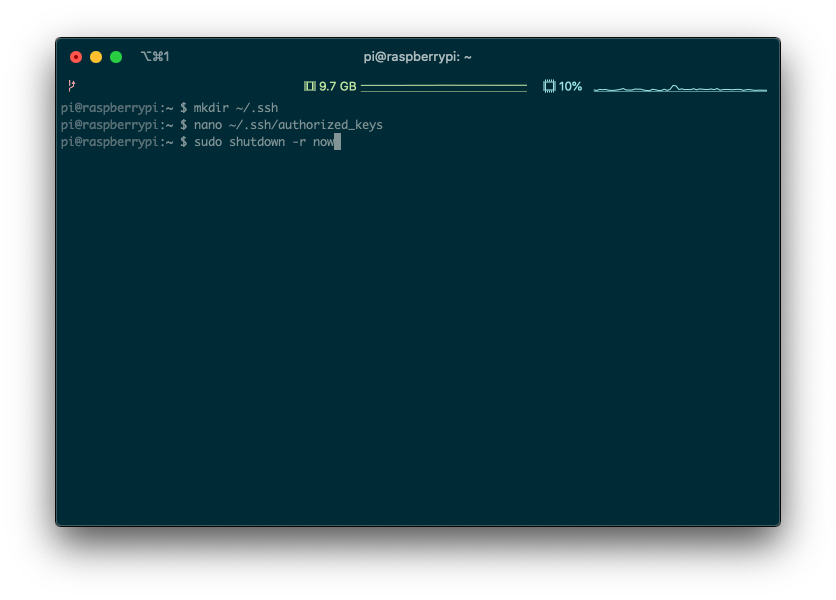

For security reasons, let's change the password by running the sudo raspi-config command. Then reboot the Pi by running sudo shutdown -r now.

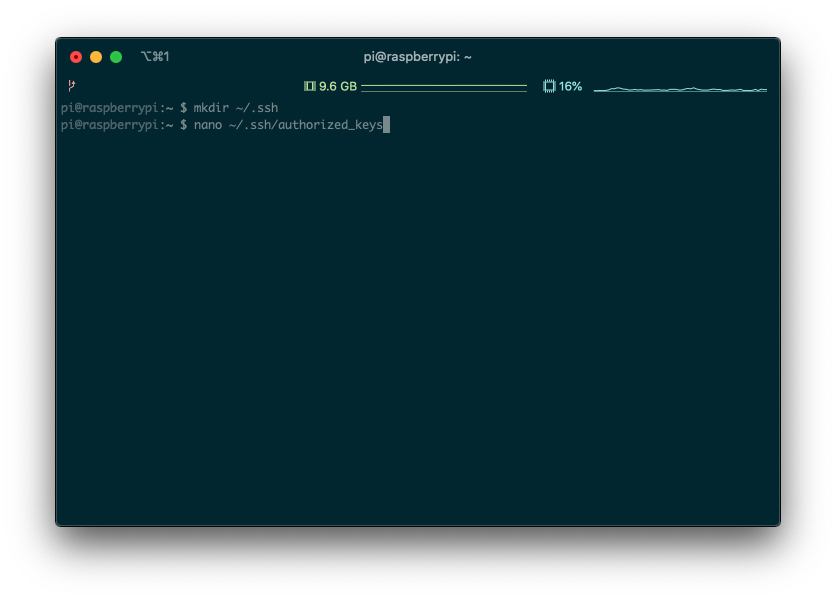

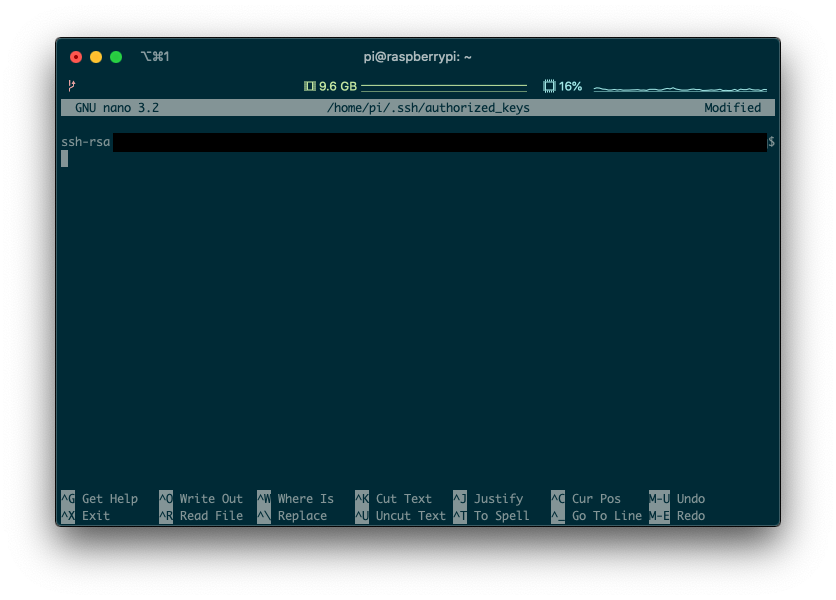

Password authentication should be good enough if the Pi is not going to be accessible from the Internet. But if you want to tighten security further, enable ssh key authentication by creating the ~/.ssh/authorized_keys file on your Pi with your public ssh keys and then rebooting the device:

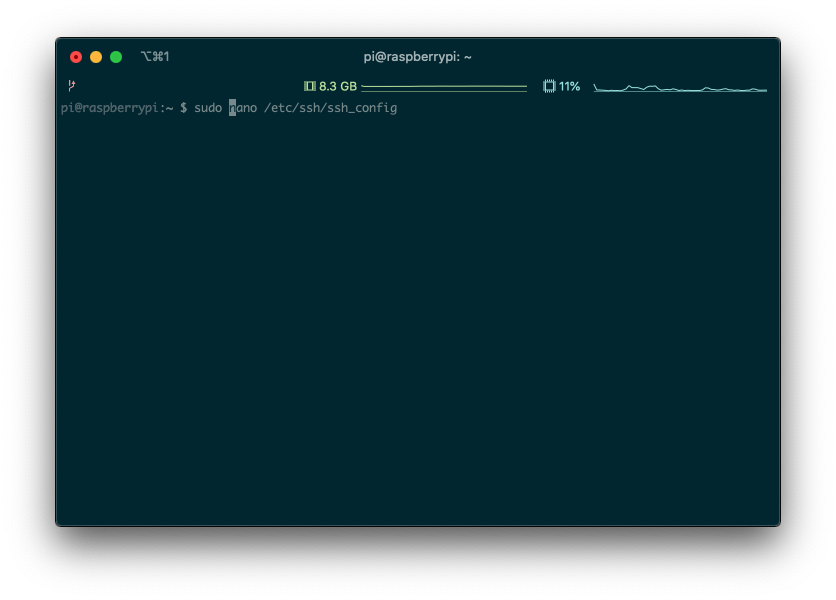

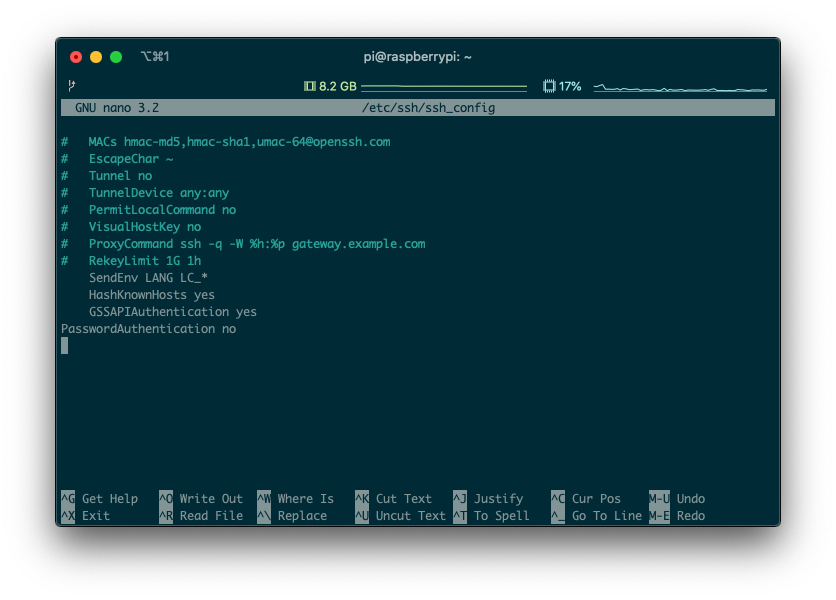

Oh, and don't forget to set PasswordAuthentication no in the /etc/ssh/sshd_config file (the server config, not the client-side ssh_config), then restart the ssh service or reboot.
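Editing the server-side /etc/ssh/sshd_config by hand works fine, but here is a small sketch of doing it from a script. The disable_password_auth helper is hypothetical, and it assumes GNU sed as found on Raspberry Pi OS. Make sure key login already works before you run it, or you will lock yourself out.

```shell
#!/bin/bash
# Sketch: set "PasswordAuthentication no" in an sshd config file.
# disable_password_auth is a hypothetical helper; it assumes GNU sed.

disable_password_auth() {
  local config="${1:-/etc/ssh/sshd_config}"
  local pattern='^[#[:space:]]*PasswordAuthentication[[:space:]].*'

  if grep -qE "$pattern" "$config"; then
    # Rewrite the existing (possibly commented-out) directive in place.
    sed -i -E "s/$pattern/PasswordAuthentication no/" "$config"
  else
    # No directive yet, so append one.
    echo 'PasswordAuthentication no' >> "$config"
  fi
}

# On the Pi, as root:
# disable_password_auth /etc/ssh/sshd_config && systemctl restart ssh
```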

Now we can connect to our Pi. Time to write the backup script!

The backup script dependencies

This script will do a mongodump of the database, upload it to Google Drive with a CLI tool called drive and then delete the local version of the files. As simple as that!

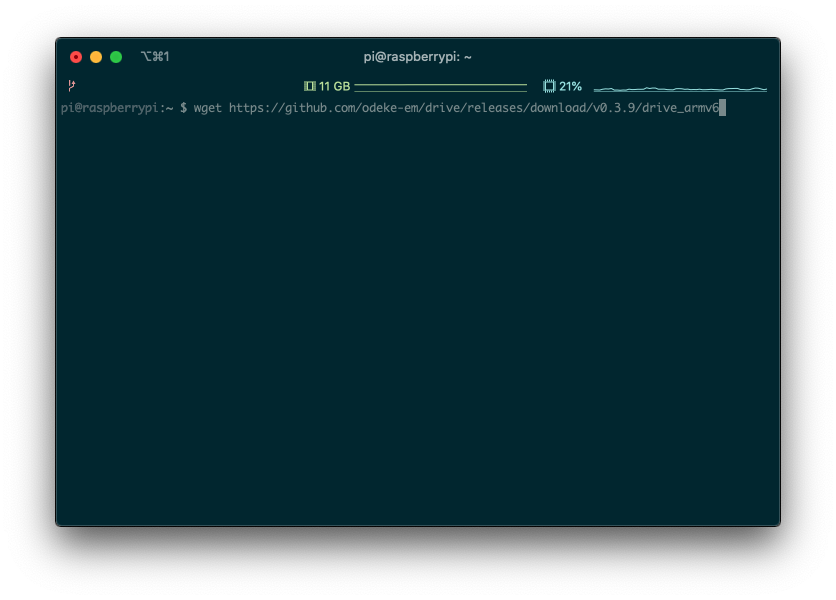

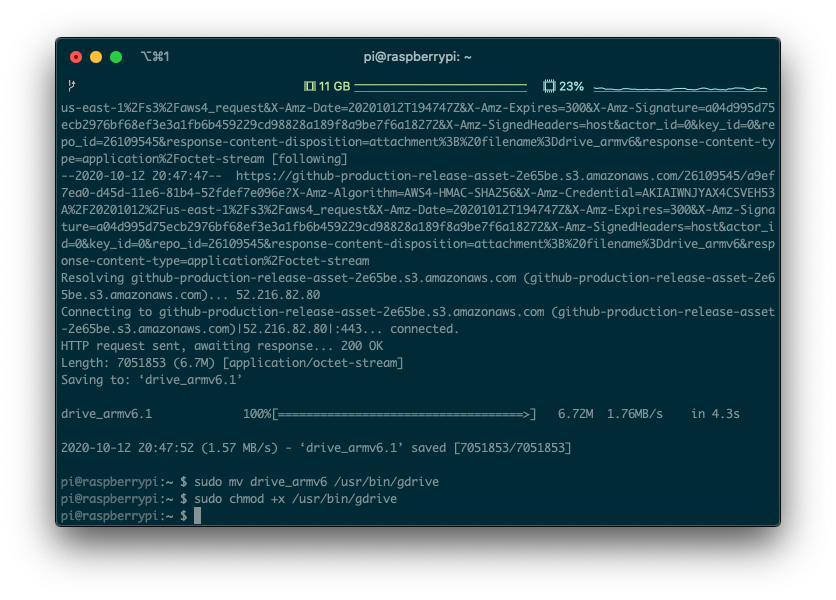

But first, install the drive CLI with the following commands:

wget https://github.com/odeke-em/drive/releases/download/v0.3.9/drive_armv6

sudo mv drive_armv6 /usr/bin/gdrive

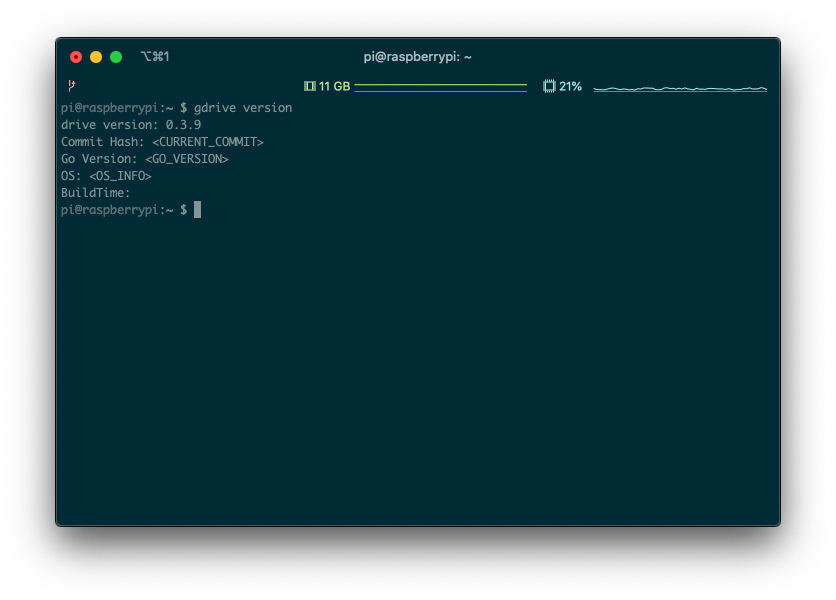

sudo chmod +x /usr/bin/gdrive

Note the armv6 architecture. Then try to run gdrive version to check the installation.

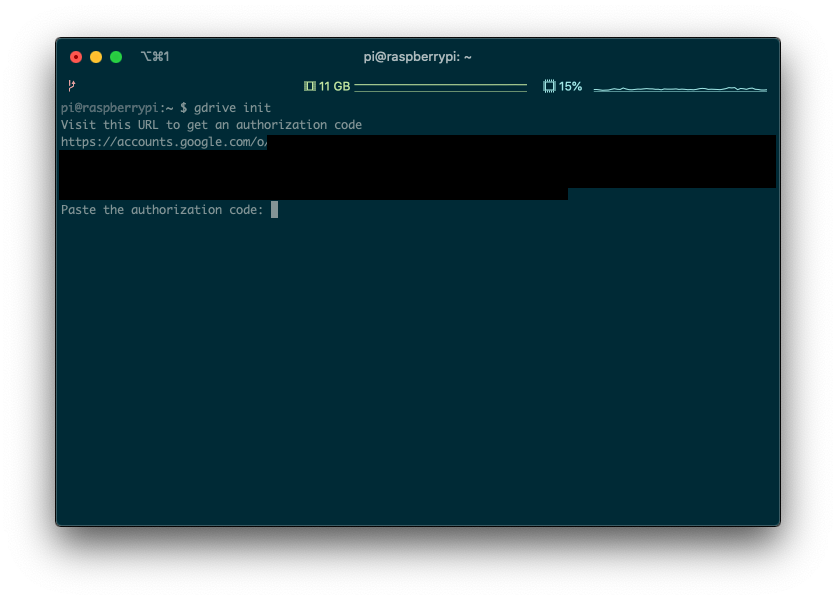

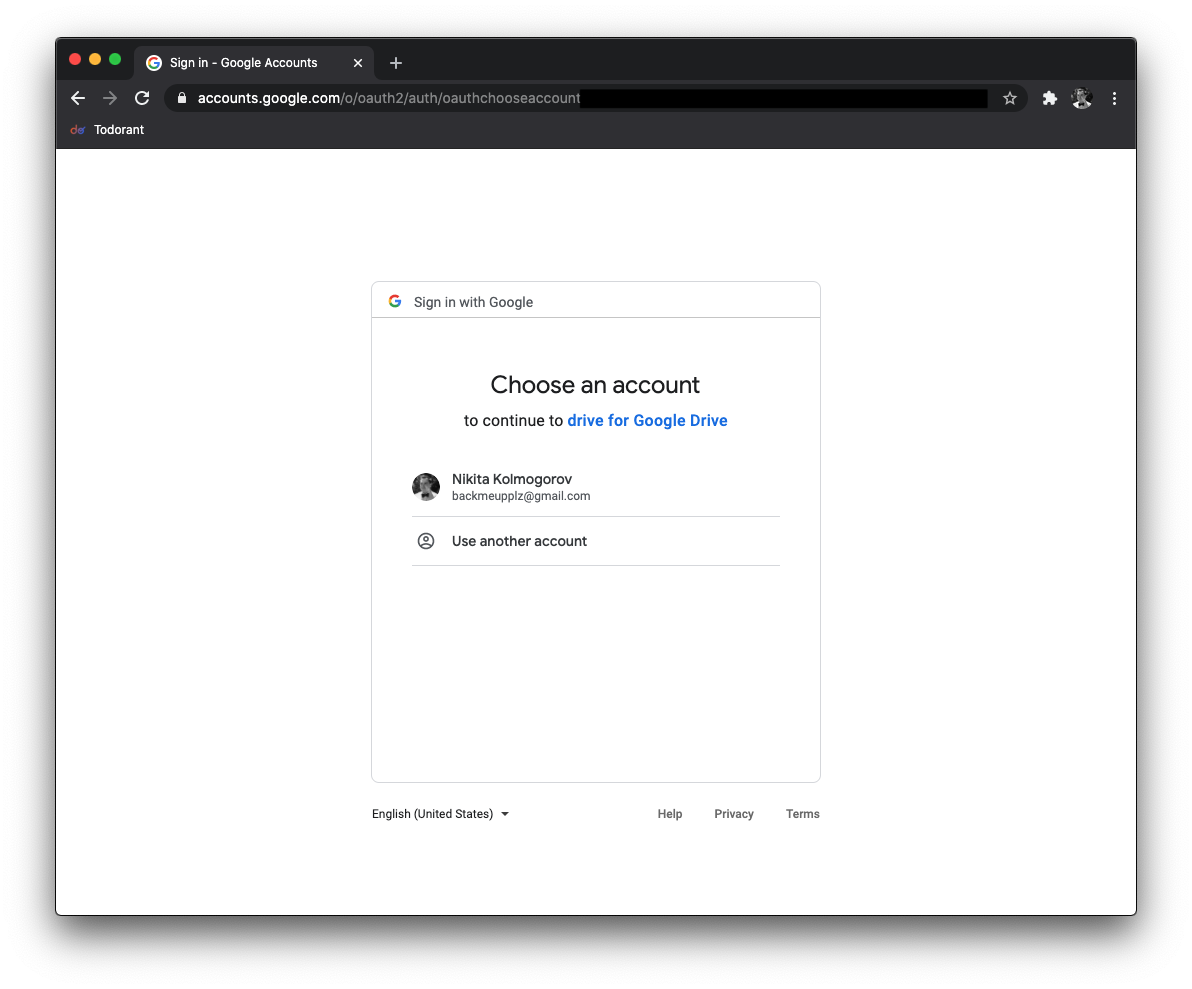

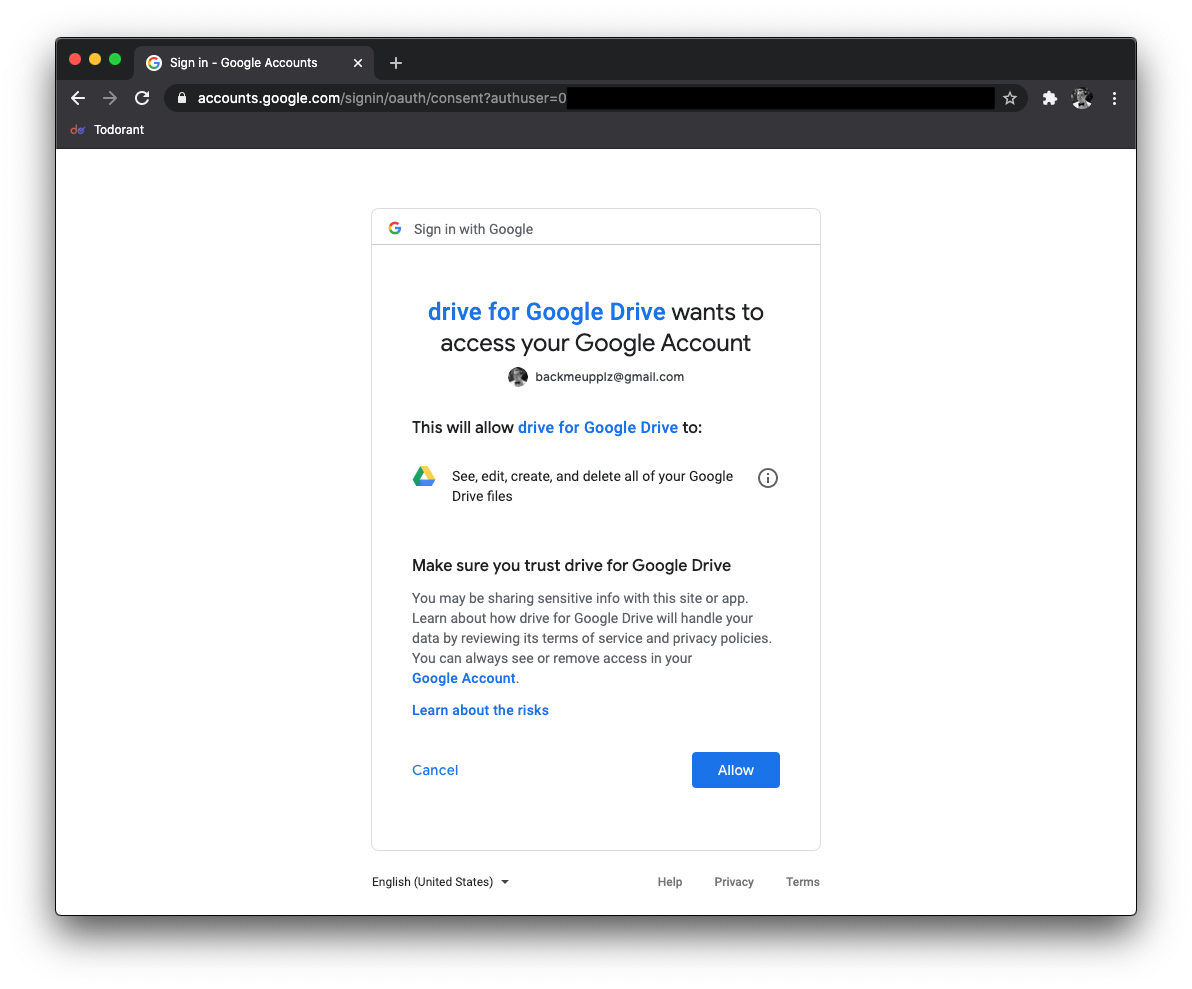

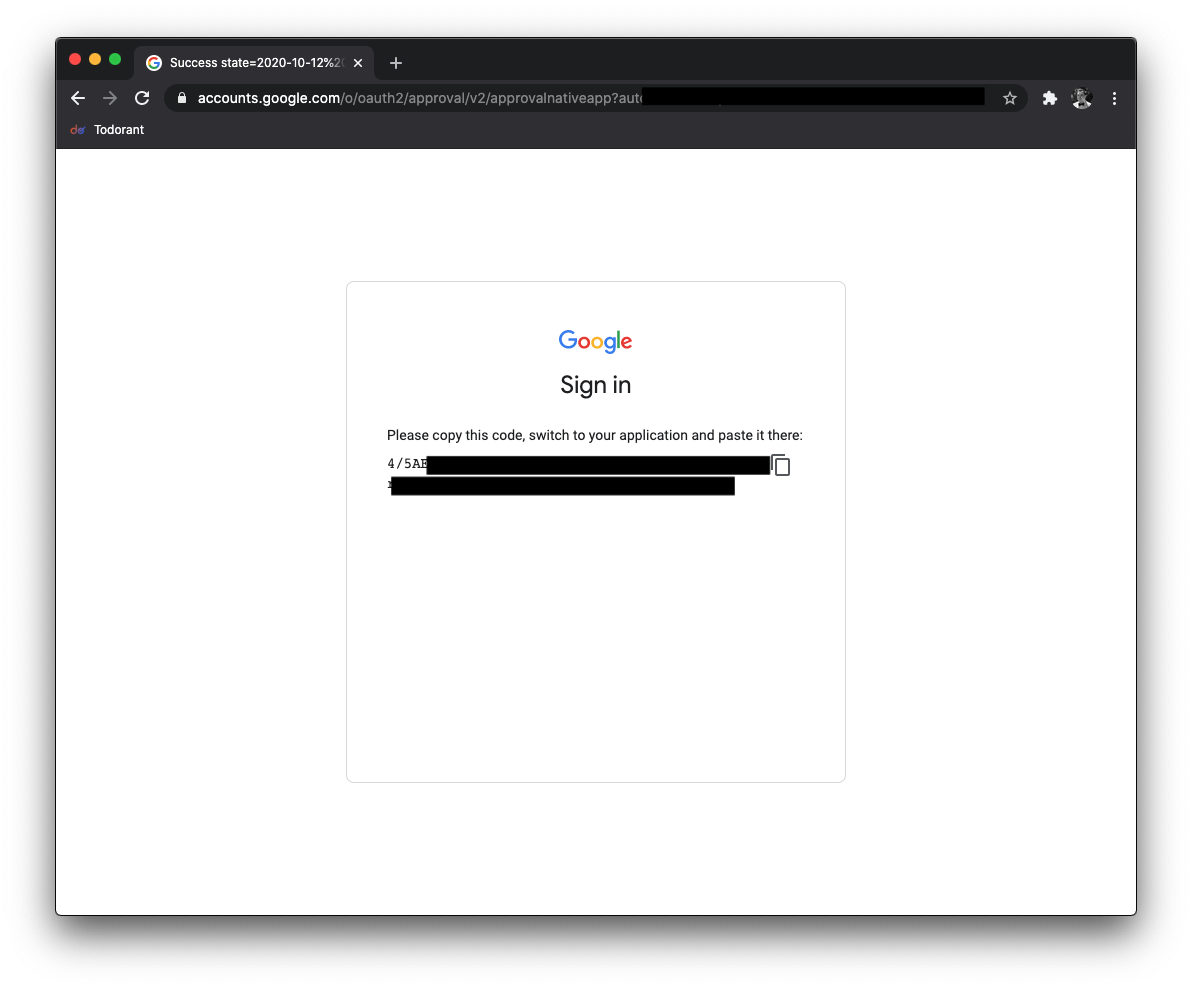

Then you'll need to authorize the drive to work in a specific folder. Create our main backup folder like mkdir ~/backups and then run gdrive init ~/backups, open the link in a browser, authorize the app and paste the authorization code.

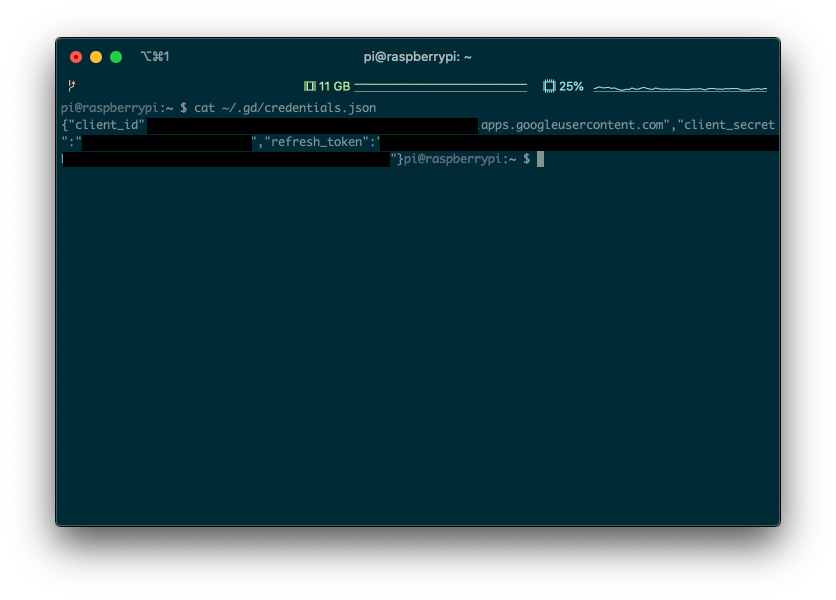

You can check if your credentials are saved by running the cat ~/backups/.gd/credentials.json command (drive keeps its .gd folder inside the folder you initialized).

Note: if you are using a different type of database, disregard everything up until the section "Uploading the database dump". Just make sure that you have a way to dump a backup from the database of your choice into a folder — then we will upload this folder to the Google Drive.

At first, I wanted to run mongodump directly on the Pi, but then I hit a wall: the Raspberry Pi Zero W is a 32-bit machine, and MongoDB hasn't supported 32-bit since version 3. This means that we can install MongoDB 2.4 at most on our Pi. So what should we do?

I thought that we could just download the database data folder to the local storage on the Pi and then upload it to Google Drive. But then we would almost always get a backup in an inconsistent state, as the data would keep changing while being copied (we cannot stop all writes to MongoDB while doing hourly backups; users won't like it).

Then I thought of a brilliant solution. Let's make our server run mongodump, make the Pi download this dump and upload it to Google Drive! Why do we need an external Pi to upload the data to Google Drive, and why not just do it on our server? It's simple: I refuse to install any extra software, like the gdrive tool above, on my production servers.

Glad we got this out of the way.

Doing and downloading the backup

Disregard this section if you're not using MongoDB. Instead, you should write your own way to get the database dump folder.

We are going to run mongodump over ssh to create the database dump on the server and then use the scp tool to download the dump through ssh.

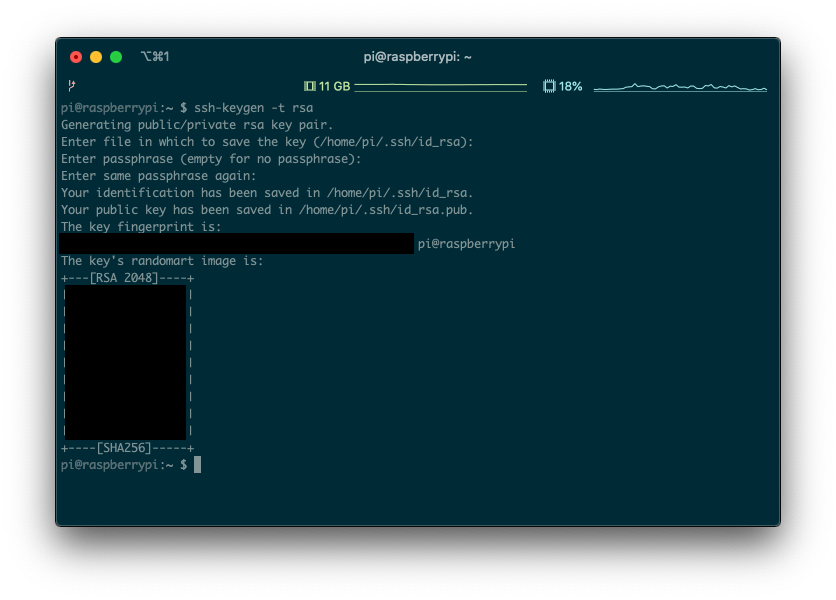

Generate an ssh key on your Pi by running this command: ssh-keygen -t rsa. Leave the passphrase empty so that the backup script can use the key unattended later.

Take the contents of ~/.ssh/id_rsa.pub from the Pi and add them to the authorized_keys of the server running your MongoDB. You can find your authorized_keys file in the ~/.ssh folder of your server.

Great! Now you should be able to ssh from your pi to your MongoDB server! Try it out by running ssh your-user@your-server-ip-address on the Pi! Exit from the server by running the exit command. You should still be ssh'ed to the Pi.

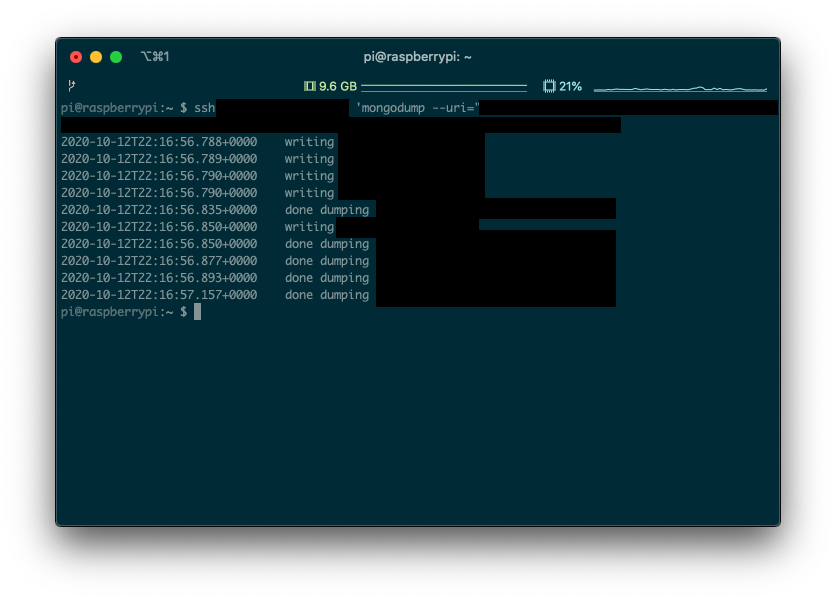

Try running the following command: ssh your_user@your_server_ip 'mongodump --uri="mongodb://username:password@localhost:27017/database" -o "/home/your_user/backups/"', obviously replacing the placeholders like your_user.

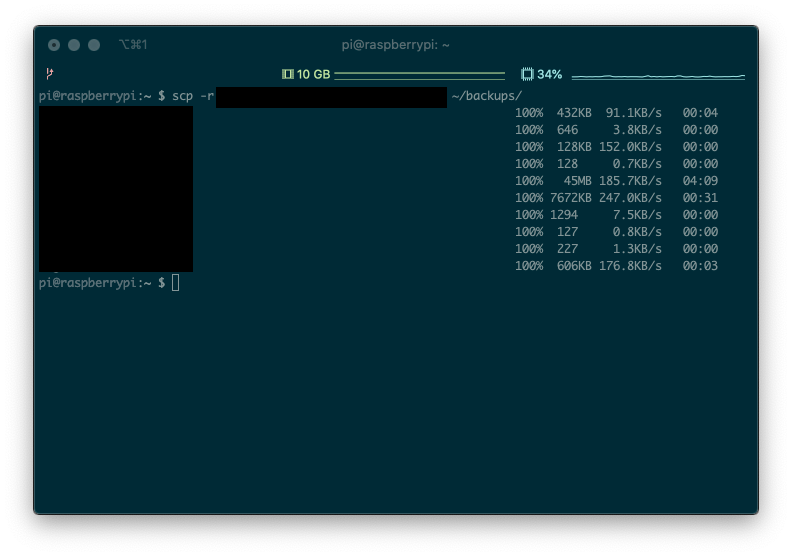

Yay! The database dump is ready to be downloaded! Let's try downloading it by running the following command: scp -r your_user@your_server_ip:/home/your_user/backups ~/backups/

Nicely done! Now you have a dump of your database on the Pi ready to be uploaded to Google Drive!

Uploading the database dump

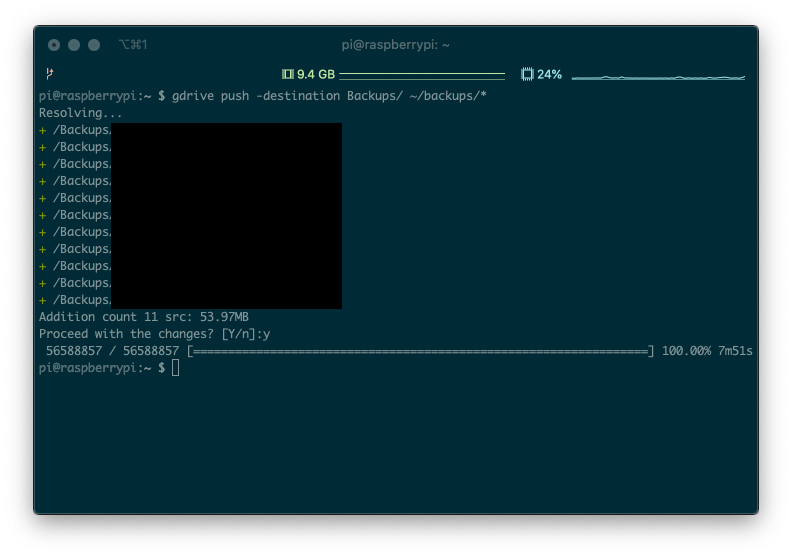

This is quite simple now that we have set up the gdrive tool. Just create a Backups folder in your Google Drive, run gdrive push -destination Backups/ ~/backups/* and observe the magic!

The backup script

Let's put everything we did before together and create a ~/backup.sh file with the following content, replacing all the placeholders:

#!/bin/bash

# 1

timestamp=$(date +%s)

# 2

ssh your_user@your_server_ip 'mongodump --uri="mongodb://user:password@localhost:27017/database" -o "/home/your_user/backups/"' &&

mkdir -p "/home/pi/backups/$timestamp" &&

# 3

scp -r your_user@your_server_ip:/home/your_user/backups/* "/home/pi/backups/$timestamp" &&

# 4

ssh your_user@your_server_ip 'rm -r /home/your_user/backups' &&

# 5

gdrive new -folder "Backups/$timestamp" &&

# 6

gdrive push -quiet "/home/pi/backups/$timestamp" &&

# 7

rm -r /home/pi/backups/* &&

# 8

curl -s "https://api.telegram.org/bot123:ABCDE/sendMessage" --data-urlencode "chat_id=76104711" --data-urlencode "text=🥧 backed up"

Don't forget to run sudo chmod +x ~/backup.sh to make sure this script can be executed. So what do we do here? The very first line (the shebang) just tells the system to run this file with bash.

1. Getting the timestamp variable

We will be saving our backups in timestamped folders like Backups/1602463694 to keep track of when each backup was made. So we are fetching the current Unix timestamp (for example, 1602463694) here.

2. Actually performing the backup on server with mongodump.

3. Downloading this backup to the Pi.

4. Now we need to clean up so that we don't bloat the server with unnecessary backups. We already downloaded the backup in #3, so why let it keep using space on the server?

5. For some reason, I had to create the timestamped folder on Google Drive prior to being able to "sync" it. It's not a huge deal, so I just rolled with it.

6. Actually syncing the backup folder with Google Drive! This might not work if you messed up the folder where you initialized gdrive above.

7. After we uploaded the backup to Google Drive, we'll just remove it from the Pi. No need to bloat this space either.

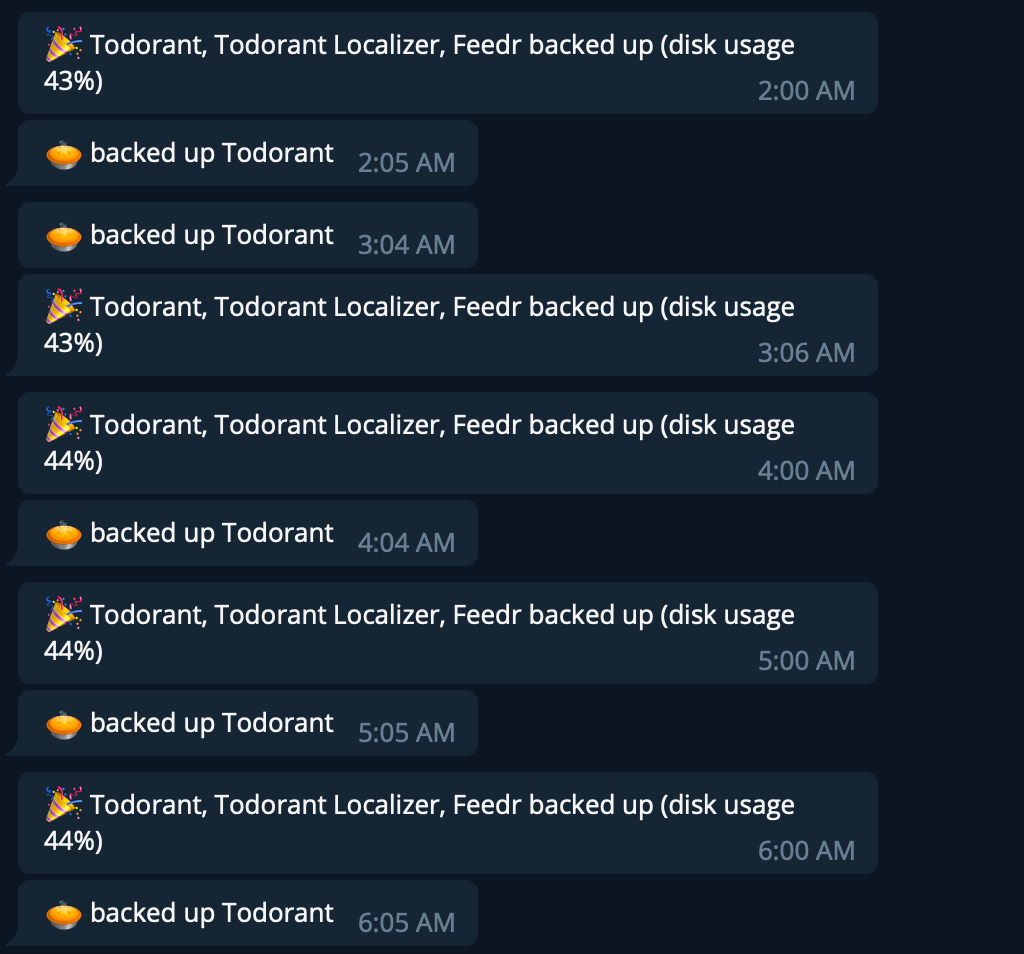

8. A nice touch is sending yourself a message over Telegram when the backup succeeds. If the messages stop coming, you will notice that the backups didn't work and can hopefully take action.
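The script above only sends a message on success, so a failure shows up as silence. If you would rather be told explicitly, here is a sketch of a wrapper that reports both outcomes. The notify and run_and_report helpers, as well as the BOT_TOKEN and CHAT_ID variables, are my own placeholders and not part of the original script.

```shell
#!/bin/bash
# Sketch: run a command and report success or failure over Telegram.
# notify, run_and_report, BOT_TOKEN and CHAT_ID are hypothetical names.

notify() {
  # --data-urlencode takes care of spaces and emoji in the message text.
  curl -s "https://api.telegram.org/bot${BOT_TOKEN}/sendMessage" \
    --data-urlencode "chat_id=${CHAT_ID}" \
    --data-urlencode "text=$1" > /dev/null
}

run_and_report() {
  # "$@" is the command to run, for example the backup steps in a script.
  if "$@"; then
    notify "🥧 backed up"
  else
    local status=$?
    notify "🥧 backup FAILED (exit code $status)"
    return "$status"
  fi
}

# Example (assumed path): run_and_report /home/pi/backup-steps.sh
```

The idea is to move steps 1 to 7 into their own script and let the wrapper decide which message to send.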

Automate running the script

There are plenty of tutorials on the Internet on how to use cronjobs. Probably there is one for your distro as well! All in all, cron allows you to schedule commands to run repeatedly at a set time interval. In my case, I just back up hourly.

The syntax of time intervals in cron is a bit fancy, but you can use crontab generators like this one to simplify the process.

You can edit the list of your cronjobs by running the command crontab -e. I appended this line to the file that showed up:

0 * * * * /home/pi/backup.sh
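If you want to add the cronjob without opening an editor, something like the sketch below should work. The add_cron_line helper and the /home/pi/backup.log path are assumptions of mine; the log redirect is just a convenient way to see what went wrong when a backup fails.

```shell
#!/bin/bash
# Sketch: append a line to the current user's crontab unless it is already there.
# add_cron_line is a hypothetical helper.

add_cron_line() {
  local line="$1" current
  # crontab -l prints the existing jobs (nothing if there are none yet).
  current=$(crontab -l 2>/dev/null)

  # Skip if the exact line is already present.
  if printf '%s\n' "$current" | grep -qxF -- "$line"; then
    return 0
  fi

  # "crontab -" installs a new crontab read from standard input.
  {
    [ -n "$current" ] && printf '%s\n' "$current"
    printf '%s\n' "$line"
  } | crontab -
}

# Example (assumed log path):
# add_cron_line '0 * * * * /home/pi/backup.sh >> /home/pi/backup.log 2>&1'
```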

Conclusion

That's all folks! With around an hour of work you automated your database backups to be uploaded to Google Drive for perpetual storage by just using a $10 board. Now you can really feel like a cyberpunk hacker because wherever in range of your wifi you plug this baby in to power, it will obey the commands and back up whatever you told it to back up!

You no longer need to pay greedy backup providers $39/month to just run a bunch of scripts.

Keep in mind that everything in this tutorial can be substituted for your favorite technologies and frameworks! Don't want to mess with Raspberry Pis? You can do everything on any Linux machine. Don't like MongoDB? Just use your own database tools to dump the data. Don't like Google Drive? Upload the backup anywhere you want. You can even use rclone instead of drive to upload to almost any cloud storage!
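As a taste of the rclone option, here is a minimal sketch of the upload step. The upload_backup helper and the gdrive:Backups remote are assumptions; you would first create a remote of your own with rclone config.

```shell
#!/bin/bash
# Sketch: upload one timestamped backup folder with rclone instead of drive.
# upload_backup is a hypothetical helper; "gdrive:Backups" is an assumed remote.

upload_backup() {
  local src="$1" remote="${2:-gdrive:Backups}"
  # rclone copy sends the contents of src into the destination directory,
  # so the folder name is appended to keep one directory per backup.
  rclone copy "$src" "$remote/$(basename "$src")"
}

# Example: upload_backup "/home/pi/backups/1602463694"
```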

This is the power of free Internet. Harvest it.

P.S., you might need to add an Internet re-connection routine.
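One possible shape for such a routine is sketched below. The ensure_online helper, the 8.8.8.8 test host and the wlan0 interface name are all assumptions, and I haven't battle-tested this on every network, so treat it as a starting point.

```shell
#!/bin/bash
# Sketch: check connectivity and nudge the wifi to reconnect if it is down.
# ensure_online is a hypothetical helper; host and interface are assumptions.

ensure_online() {
  local host="${1:-8.8.8.8}" attempts="${2:-3}" i=0

  while [ "$i" -lt "$attempts" ]; do
    # One ping with a 5 second timeout (Linux ping flags).
    if ping -c 1 -W 5 "$host" > /dev/null 2>&1; then
      return 0
    fi
    # Ask wpa_supplicant to reload its config and re-associate.
    wpa_cli -i wlan0 reconfigure > /dev/null 2>&1
    sleep 10
    i=$((i + 1))
  done

  return 1
}

# Example: call it at the top of the backup script and bail out if offline.
# ensure_online 8.8.8.8 3 || exit 1
```

You could also run it from its own cronjob every few minutes so the Pi recovers even between backups.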